I save the front view of the model as RGB and find the landmarks of the face. But I need to create these landmarks on the 3d model. I need a link between the RGB pixel and the model voxel. Thank you.

Do you want to mark points on a surface mesh? Why don’t you do that directly in Slicer?

My goal is to find the face landmarks in the 3d head model in Slicer.

I don’t know how to do this in Slicer. Maybe curvature can be used.

But Google's MediaPipe code detects face landmarks in RGB images very well.

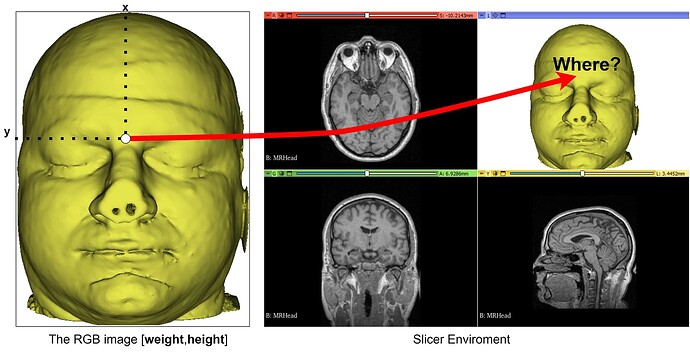

Because of this, I captured an RGB image of the model in Slicer and found the landmarks with MediaPipe.

Now I want to find out which point on the 3D model surface each 2D landmark corresponds to.
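MediaPipe reports face landmarks with x and y normalized to the range 0..1 by the image width and height, so they have to be converted to pixel coordinates before they can be related to the rendered view. A minimal sketch of that conversion follows; the helper name `landmark_to_pixel` and the 1920x1080 image size are only illustrative assumptions.

```python
def landmark_to_pixel(norm_x, norm_y, image_width, image_height):
    """Convert a normalized landmark (0..1) to integer pixel coordinates.

    Hypothetical helper. The origin is the top-left corner of the image,
    x grows to the right and y grows downward.
    """
    # Clamp so a landmark slightly outside the frame still maps to a valid pixel.
    x = min(max(norm_x, 0.0), 1.0)
    y = min(max(norm_y, 0.0), 1.0)
    px = min(int(x * image_width), image_width - 1)
    py = min(int(y * image_height), image_height - 1)
    return px, py


# Illustrative values only: a landmark in the middle of a 1920x1080 capture.
print(landmark_to_pixel(0.5, 0.5, 1920, 1080))
```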

The figure shows a face landmark (F3). I want to find the point on the surface that corresponds to it. I think I can use trimesh's ray functions for this: I'm trying to find the intersection with the surface by casting a ray starting from F3.

I need the mesh information of the model. I can get the nodes.

from vtk.util import numpy_support

ModelNode = slicer.mrmlScene.GetFirstNodeByName('Model')

Vertices = numpy_support.vtk_to_numpy(ModelNode.GetPolyData().GetPoints().GetData())

But I can’t get the face (triangle) information.

Faces = ModelNode.GetMesh().GetCellData().GetArray()
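One possible way to get the faces is to read the polygon connectivity of the poly data instead of the cell data arrays. In its legacy layout VTK stores it as a flat list `[n, id0, id1, ..., n, id0, ...]`, where each `n` is the number of points in the next cell. The sketch below decodes such a list into triangles in plain Python. The helper name `cells_to_triangles` is hypothetical, and the Slicer calls in the comments assume that `GetPolys().GetData()` returns this legacy layout in your VTK version. If the model contains polygons other than triangles, it could be passed through `vtkTriangleFilter` first.

```python
def cells_to_triangles(flat_cells):
    """Decode VTK's flat cell list [n, id0, ..., n, id0, ...] into triangles.

    Cells that are not triangles (n != 3) are skipped.
    """
    triangles = []
    i = 0
    while i < len(flat_cells):
        n = int(flat_cells[i])  # number of point ids in this cell
        ids = [int(v) for v in flat_cells[i + 1:i + 1 + n]]
        if n == 3:
            triangles.append(ids)
        i += n + 1  # jump to the next cell's count entry
    return triangles


# In Slicer the flat list could be obtained like this (assumption, not run here):
#   polys = ModelNode.GetPolyData().GetPolys()
#   flat_cells = numpy_support.vtk_to_numpy(polys.GetData())
flat_cells = [3, 0, 1, 2, 3, 2, 1, 3]  # two made-up triangles
print(cells_to_triangles(flat_cells))  # prints [[0, 1, 2], [2, 1, 3]]
```

The resulting list, together with `Vertices`, is the kind of input a mesh library such as trimesh expects.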

Thank you.

That’s good, you can then convert the 2D display coordinates to 3D coordinates using point picking as shown in this code snippet.

Thanks Lassoan. This code worked for me. Only one problem remains: how can I find the maximum values of the horizontal and vertical variables in the command below? In other words, how can I get the image size?

modelDisplayableManager = threeDViewWidget.threeDView().displayableManagerByClassName("vtkMRMLModelDisplayableManager")

modelDisplayableManager.Pick( horizontal, vertical )

If you have the image you know its size, too.

I get the RGB image by taking a screen capture of the model in the threeDView. When I do that, the size of the captured image differs from the size of the original data. I want to eliminate this difference.

In other words, I want to save an RGB image from the anterior view of the 3d model, find the landmark of the face, and create the landmark I found on the model.

I know the RGB image dimensions and the position of the corresponding point, but I don’t know how to find its place on the model from this information.

Using the code below, I can put a landmark at the [i,j] point of the model. However, I cannot connect [x,y] in the picture to [i,j] in the model. Thank you for your help.

if modelDisplayableManager.Pick(i, j) and modelDisplayableManager.GetPickedNodeID():

    rasPosition = modelDisplayableManager.GetPickedRAS()

    slicer.modules.markups.logic().AddControlPoint(rasPosition[0], rasPosition[1], rasPosition[2])

You can capture the rendered image from the 3D view as shown in Python examples in the script repository.

I can capture an anterior image of the 3D model. However, when I place a landmark at the x,y coordinates of this image, does it end up in the right place on the 3D model?

The captured image size varies depending on whether the program is full screen or not. This leads to inconsistency.
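If the saved image and the 3D view ever do differ in size, one way to remove the inconsistency is to rescale the pixel position to the current view size before picking. The sketch below assumes the image shows the whole view and was only resampled (no cropping, same aspect ratio). The helper name `image_to_view_pixel` and the sizes used are illustrative assumptions.

```python
def image_to_view_pixel(x, y, image_size, view_size):
    """Scale a pixel position from the saved image to the 3D view size.

    image_size and view_size are (width, height) tuples. Hypothetical helper;
    it assumes the image is a resampled copy of the full view.
    """
    image_w, image_h = image_size
    view_w, view_h = view_size
    view_x = int(round(x * view_w / image_w))
    view_y = int(round(y * view_h / image_h))
    return view_x, view_y


# In Slicer the view size could be read with (assumption, not run here):
#   view_size = threeDView.renderWindow().GetSize()
# Made-up example: an image twice as large as the view.
print(image_to_view_pixel(200, 100, (1920, 1080), (960, 540)))
```

When both sizes are equal the function returns the input unchanged, which matches the case reported later in this thread, where the image coordinates could be passed to `Pick` directly. No axis flip is applied here for the same reason.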

Problem solved. It turns out that the RGB dimensions I saved and the dimensions of the 3d model were exactly the same. Dear Andrew, thank you for your valuable guidance.

The code below got the job done.

threeDView = slicer.app.layoutManager().threeDWidget(0).threeDView()

modelDisplayableManager = threeDView.displayableManagerByClassName("vtkMRMLModelDisplayableManager")

#threeDView.resetFocalPoint() # reset the 3D view cube size and center it

#threeDView.resetCamera() # reset camera zoom

threeDView.rotateToViewAxis(3) # look from anterior direction

renderWindow = threeDView.renderWindow()

renderWindow.SetAlphaBitPlanes(1)

wti = vtk.vtkWindowToImageFilter()

wti.SetInputBufferTypeToRGBA()

wti.SetInput(renderWindow)

writer = vtk.vtkPNGWriter()

writer.SetFileName("C:/Users/Administrator/Desktop/Slicer/face.png")

writer.SetInputConnection(wti.GetOutputPort())

writer.Write()

FaceXY = [[957, 659]] # for example

for x, y in FaceXY:

    # Pick the model surface under this pixel and add a control point there

    if modelDisplayableManager.Pick(x, y) and modelDisplayableManager.GetPickedNodeID():

        rasPosition = modelDisplayableManager.GetPickedRAS()

        slicer.modules.markups.logic().AddControlPoint(rasPosition[0], rasPosition[1], rasPosition[2])