I know 3D Slicer can merge two volume/slice nodes from separate CT data, but is it possible to merge a PLY/STL loaded model node with a CT volume/slice node?

Yes, you can load PLY/STL models and display them with the CT. But because those formats don’t define a reference space they may not align. See this post for example.

Not quite what I was referring to. For example, we obtain 2 nodes as such

ply_node = slicer.util.getNode('model_name')

ct_node = slicer.util.getNode('ct_name')

merged_node = merge(ply_node, ct_node)

such that merged_node contains the image/volume data from both nodes

How do you envision combining models and volumes? Would you like to end up with a model or a volume?

You can import a model into a segmentation and then use Segment Editor’s Mask volume effect (provided by SegmentEditorExtraEffects extension) to paste the segment into the volume.

Hey, I found this in the nightly script repository:

meshVolumeNode = getNode(mesh_model)

seg = slicer.mrmlScene.AddNewNodeByClass('vtkMRMLSegmentationNode')

slicer.modules.segmentations.logic().ImportModelToSegmentationNode(meshVolumeNode, seg)

seg.CreateClosedSurfaceRepresentation()

slicer.mrmlScene.RemoveNode(meshVolumeNode)

once i have a segment volume node, how can i merge it with a CT volume node?

also what is the function SetAndObserveImageData used for? the VTK documentation doesn’t explain much.

I’m working on an extension that builds around panoramic reconstruction which essentially straightens a volume: Slice rotation in viewport when using SetSliceToRASByNTP in python - #2 by lassoan

But I also want this reconstruction to incorporate mesh models, so I'm trying to merge volumes. I'm not sure if this is the correct approach.

thanks!

OK, this explains everything.

You can create 3D panoramic reconstructions using this recently added module: How to implement CPR (curved planar reconstruction) from centerline? - #3 by lassoan

To display models in the same way, you can import the model to segmentation node, from there export as a labelmap volume node, and then apply curved planar reconstruction to the labelmap volume.
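The model-to-labelmap steps above could be scripted roughly as follows. This is a minimal sketch, not the module's own code: the function name `model_to_labelmap` and its argument names are made up for illustration, and it assumes it runs inside Slicer's Python environment with a model node and a CT volume node already loaded.

```python
def model_to_labelmap(model_node, reference_volume_node):
    """Convert a model node to a labelmap volume on the reference volume's grid.

    Hypothetical helper for illustration; both arguments are MRML nodes.
    """
    import slicer  # only available inside 3D Slicer's Python environment

    logic = slicer.modules.segmentations.logic()

    # Import the surface mesh as a segment of a new segmentation node.
    segmentation_node = slicer.mrmlScene.AddNewNodeByClass("vtkMRMLSegmentationNode")
    # Use the CT geometry so the binary labelmap matches its voxel grid.
    segmentation_node.SetReferenceImageGeometryParameterFromVolumeNode(reference_volume_node)
    logic.ImportModelToSegmentationNode(model_node, segmentation_node)

    # Export the segment as a labelmap volume aligned with the CT.
    labelmap_node = slicer.mrmlScene.AddNewNodeByClass("vtkMRMLLabelMapVolumeNode")
    logic.ExportVisibleSegmentsToLabelmapNode(
        segmentation_node, labelmap_node, reference_volume_node)
    return labelmap_node
```

The returned labelmap volume can then be selected as the input volume for curved planar reconstruction, in the same way as the CT.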

An alternative approach would be to create a grid transform from the transformed corner points of the slices, but this would require a little bit of Python scripting. This method would be particularly useful for transforming sparse point sets (landmark points, lines, curves), which cannot be well represented by image volumes. It would be nice if you could work on this; we can help you get started.

Whoa, this is really neat! I didn’t realize this feature had been implemented. Ultimately I’m trying to do implant planning, so having an STL/PLY mesh representation of the implant shown in the pan tomographic representation would be really useful.

Can I merge segmentation with ct node in order to use it as an input volume for CPR?

Or can multiple volume node inputs be implemented?

The Mask volume effect works beautifully with the CPR extension. One caveat, though: when I use Mask volume, the segment color turns gray once projected onto the CT volume. So when I use the CPR extension to transform the result, the gray color of the segment blends into the gray values of the CT volume.

any workaround for that?

In mask volume effect, you can pick the gray color intensity. Probably you want to set a high value to indicate that the implant is very dense material.
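To make the effect of the fill value concrete, here is a small NumPy sketch of the idea (not the Mask volume effect's actual implementation): voxels inside the segment are overwritten with a chosen intensity. The function name `fill_inside_segment` and the value 3000 are illustrative assumptions; inside Slicer, the voxel arrays could be obtained with `slicer.util.arrayFromVolume`.

```python
import numpy as np

def fill_inside_segment(ct_array, labelmap_array, fill_value):
    """Return a copy of ct_array with voxels inside the labelmap set to fill_value."""
    if ct_array.shape != labelmap_array.shape:
        raise ValueError("CT and labelmap must share the same voxel grid")
    merged = ct_array.copy()                 # leave the original CT untouched
    merged[labelmap_array > 0] = fill_value  # overwrite only voxels inside the segment
    return merged

# Tiny 2x2 example: one voxel belongs to the implant.
ct = np.array([[-1000, 40], [300, 1200]], dtype=np.int16)
implant = np.array([[0, 1], [0, 0]], dtype=np.uint8)
merged = fill_inside_segment(ct, implant, fill_value=3000)
```

Picking a fill value well above the intensities present in the CT keeps the implant distinguishable after the volume is straightened.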

hey,

i saw on the nightly script repository an example on how to access scripted modules. I want to access the mask volume effect, i am trying this

slicer.modules.segmenteditoreffect.widgetRepresentation().maskVolumeWithSegment()

but i get that module ‘modules’ has no attribute ‘segmenteditoreffect’

You can learn from these examples how to use Segment Editor effects from scripts: https://www.slicer.org/wiki/Documentation/Nightly/ScriptRepository#How_to_run_segment_editor_effects_from_a_script

Hey,

I am working on an extension that would greatly benefit from being able to accomplish this - transforming any model object outline when straightening out the volume along the path. Any pointers where to begin?

I implemented the mask workaround you suggested but it is insufficient, as you mentioned, in visualizing sparse point sets like model outlines, points and lines.

I’ve already implemented this in Curved Planar Reformat module. It can now create a transform that you can use to transform implants, landmark points, models, etc. between the original and straightened volume.

Hey,

Amazing piece of code in the Curved Planar Reformat module. I managed to implement it and it works well.

I have arch-form intraoral models and those transform really well. When I add a cylindrical implant-type object, however, it is not transformed how I expected. This happens with a simple cylinder of only a few vertices, as well as with higher-density cylinder-type meshes.

I am not quite sure why some objects do not transform as well. you can see that the implant is centered over the curve. any thoughts?

I set transformSpacingFactor = 0.1 in your code, but I can’t tell if it improved things. Should I make the value even smaller?

Thanks!

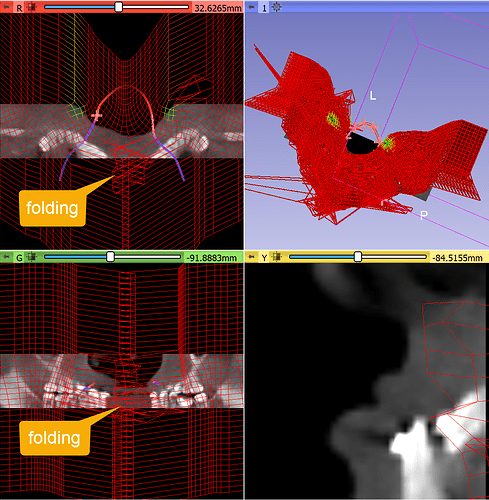

If the slice size is too large or the curve resolution is too fine, then in some regions the transform can map the same point to different positions (the displacement field folds into itself). In these regions the transform is not invertible.

To reduce these ambiguously mapped regions, decrease Slice size. If necessary, Curve resolution can be slightly increased as well (it controls how densely the curve is sampled to generate the displacement field; if samples are farther from each other, contradicting samples become less likely).

You can quickly validate the transform by going to the Transforms module and, in the Display/Visualization section, checking all 3 checkboxes, selecting the straightened image as Region, and setting the visualization mode to Grid.

For example, this transform results in a smooth displacement field, it is invertible in the visualized region:

If the slice size is increased then folding occurs:

Probably you can find a parameter set that works for a large group of patients. Maybe one parameter set works for all, but maybe you need a few different presets (small, medium, large).
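The folding described above can be illustrated in one dimension. This is only a toy sketch of the concept, not how Slicer evaluates transforms: a displacement field is invertible along a line only if the displaced samples keep their order. The function name `find_folds` and the sample values are made up for illustration.

```python
def find_folds(sample_positions, displacements):
    """Return indices i where displaced sample i+1 does not lie after displaced sample i."""
    mapped = [x + d for x, d in zip(sample_positions, displacements)]
    return [i for i in range(len(mapped) - 1) if mapped[i + 1] <= mapped[i]]

positions = [0.0, 1.0, 2.0, 3.0]

# Smooth field: displaced samples stay in order, so the mapping is invertible.
smooth = [0.0, 0.2, 0.4, 0.6]

# Contradicting neighbors: sample 1 is pushed past sample 2, so the field folds.
folded = [0.0, 1.5, 0.2, 0.6]

smooth_folds = find_folds(positions, smooth)
folded_folds = find_folds(positions, folded)
```

Spacing the samples farther apart, or reducing how far they are displaced, makes such order reversals less likely, which matches the advice on Curve resolution and Slice size.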

Hi, can you explain how I can convert an implant from CT to CPR? Thanks.

You can transform the implant model from the original CT to the straightened image by applying the straightening transform to the implant model.
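In a script, applying the transform could look roughly like this. It is a sketch under assumptions: the helper name `apply_straightening_transform` is hypothetical, and the node names in the usage comment are placeholders for whatever your scene contains.

```python
def apply_straightening_transform(model_node, transform_node, harden=False):
    """Place a model under a transform node; optionally bake the transform into the mesh.

    Hypothetical helper for illustration; both arguments are MRML nodes.
    """
    # Parent the model under the straightening transform, so it is displayed
    # in the straightened space while its stored points stay unchanged.
    model_node.SetAndObserveTransformNodeID(transform_node.GetID())
    if harden:
        # Permanently apply the transform to the model's points.
        model_node.HardenTransform()
    return model_node

# Usage inside Slicer (node names are placeholders):
#   implant = slicer.util.getNode('implant_model')
#   straightening = slicer.util.getNode('straightening_transform')
#   apply_straightening_transform(implant, straightening)
```

Leaving `harden` off keeps the original model geometry intact, so removing the transform returns the implant to its position in the original CT.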

CT to CPR? What is CPR format, I haven’t heard of it before?

Thanks for the reply, but I cannot integrate the implant into the original CBCT.